Tips and tricks for running a successful A/B test

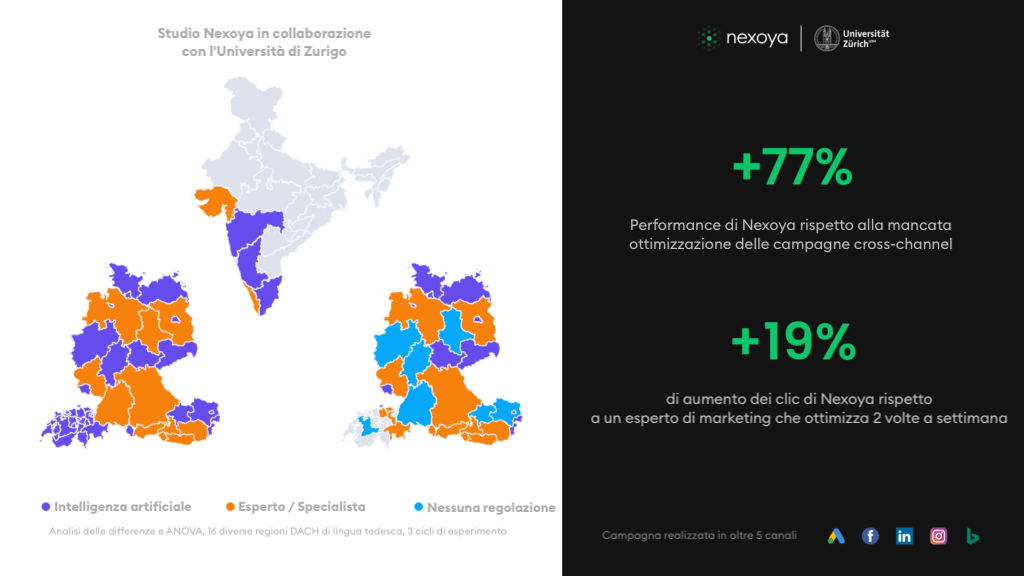

Running field experiments is not as easy as it might seem. Below we summarize the most important lessons from a large study we ran for 8 months in collaboration with the University of Zurich. The study was part of a broader research project at UZH.

In today's varied, complex and fast-moving digital marketing landscape, it can be a huge challenge to understand exactly what impact your marketing campaigns have on your results.

Did sales go up because of the new display campaign you just launched, or because the launch coincided with some other factor? That factor could be a seasonal event such as Easter or, to take 2020 as an example, a pandemic lockdown.

Of course, experience brings knowledge, so you can probably recognize how your various marketing channels interact with external factors. But what happens when you are testing a new marketing solution or using an innovative technology?

This is where A/B tests come in. They are a fundamental part of a marketer's toolkit. They are also an excellent way to understand how a particular area of your marketing affects your bottom line, while ruling out, with a known level of statistical confidence, that the observed effects are simply due to chance.

That is exactly what we did in Nexoya's collaboration with the University of Zurich.

For her master's thesis, Flavia Wagner wanted to understand how using AI, rather than marketing experts, affected overall results. Nexoya was the perfect solution to put to the test. We use AI to optimize cross-channel marketing budgets on a dynamic, continuous basis, allocating spend to achieve the best and most cost-efficient performance.

Wagner's hypothesis was simple: AI-driven optimization delivers better results than marketing budgets optimized manually by a marketing expert.

Because the experiment was part of a master's thesis that would undergo rigorous review, the results had to withstand statistical scrutiny. The gold standard here is the randomized field experiment, which provides reliable and generalizable estimates of an effect. Wagner applied multiple statistical analyses to data collected over a semester. She concluded that Nexoya's AI-driven budget optimization produced 19% more clicks than the marketing expert and 77% more than the control group.
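Figures such as "19% more clicks" are relative differences. The minimal sketch below shows the arithmetic, assuming the figures are read as a plain ratio of click totals. The study's actual estimates come from its statistical models, not from this formula. The click totals are hypothetical and were picked only so the ratios reproduce 19% and 77%.

```python
def relative_lift(treatment: float, reference: float) -> float:
    """Relative difference of `treatment` over `reference`, as a fraction."""
    if reference == 0:
        raise ValueError("reference must be non-zero")
    return (treatment - reference) / reference


# Hypothetical click totals, NOT data from the study.
clicks = {"ai": 1770, "expert": 1487, "control": 1000}

lift_vs_expert = relative_lift(clicks["ai"], clicks["expert"])
lift_vs_control = relative_lift(clicks["ai"], clicks["control"])
print(f"AI vs expert:  {lift_vs_expert:+.0%}")
print(f"AI vs control: {lift_vs_control:+.0%}")
```

The reference group matters. The same AI total yields a much larger lift against an unoptimized control than against a competent expert.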

Thanks to the A/B test, we were able to demonstrate conclusively the impact of our marketing tool on campaign results.

So, without further ado, here are our tips and tricks for setting up an A/B test:

Include a control group

- A baseline period during which no changes are made lets you check that no external factor is affecting the results in one test group but not the other. In each of our two experiment rounds, we ran our campaigns for 7 days without any changes before starting the optimization. In the second experiment we also kept a control group running throughout. This showed how Nexoya and the expert compared with a marketing campaign that received no budget optimization. Together, the baseline and the control group made it possible to analyze the results as differences: before versus after, and treatment versus control.
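The baseline week and the control group are what make a difference analysis possible. The minimal sketch below computes the classic 2×2 difference-in-differences estimate. It assumes daily click counts for one treated group and the control group, covering the 7-day baseline and an equally long optimization period. All numbers are hypothetical. The control group's change is subtracted from the treated group's change, so shifts that hit both groups, such as seasonality, cancel out.

```python
from statistics import mean


def diff_in_diff(treated_pre, treated_post, control_pre, control_post):
    """Classic 2x2 difference-in-differences estimate on daily metric values."""
    treated_change = mean(treated_post) - mean(treated_pre)
    control_change = mean(control_post) - mean(control_pre)
    return treated_change - control_change


# Hypothetical daily clicks (7 baseline days, then 7 optimized days), not study data.
treated_pre = [100, 98, 105, 102, 99, 101, 95]
treated_post = [120, 118, 125, 122, 119, 121, 115]
control_pre = [80, 82, 78, 81, 79, 80, 80]
control_post = [85, 86, 84, 85, 83, 87, 85]

effect = diff_in_diff(treated_pre, treated_post, control_pre, control_post)
print(f"Estimated effect: {effect} clicks per day")
```

Here the treated group gains 20 clicks per day and the control group gains 5, so the estimated effect of the optimization is 15 clicks per day. The estimate relies on the parallel-trends assumption: without the intervention, both groups would have moved in parallel.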

Be meticulous when splitting test groups

- There are many ways to split test groups, but it is essential to identify every factor that can influence the groups and to control for it. It is also important that there is no interaction or overlap between the groups. In Nexoya's experiments for UZH, the groups were split by geographic region. We wanted audience size and interests to be as equal as possible across the geographic blocks. To achieve this, we defined the blocks using factors such as population, GDP, audience interest (for example, the number of tech companies relevant to our product) and search volumes.
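One way to put this into practice is blocked (matched) randomization. You rank candidate regions by their covariates, group regions that look alike, and then randomize the arms within each group. The sketch below uses hypothetical regions and covariate values. It scores similarity as an unweighted sum of standardized covariates, which is our simplifying assumption and not the procedure used in the study.

```python
import random
from statistics import mean, pstdev


def z_scores(values):
    """Standardize numbers to mean 0 and (population) standard deviation 1."""
    mu, sigma = mean(values), pstdev(values)
    return [(v - mu) / sigma if sigma else 0.0 for v in values]


def blocked_assignment(covariates, arms, seed=0):
    """Assign one arm per region within blocks of similar regions.

    covariates: {region: {covariate_name: value}}; arms: e.g. ("ai", "expert").
    Regions are ranked by the sum of their standardized covariates, cut into
    consecutive blocks of len(arms) regions, and arms are shuffled per block.
    """
    regions = list(covariates)
    if len(regions) % len(arms):
        raise ValueError("number of regions must be a multiple of the number of arms")
    names = list(covariates[regions[0]])
    # Standardize each covariate so differing units do not let one dominate.
    columns = [z_scores([covariates[r][name] for r in regions]) for name in names]
    score = {r: sum(col[i] for col in columns) for i, r in enumerate(regions)}
    ordered = sorted(regions, key=score.get)
    rng = random.Random(seed)  # fixed seed keeps the split reproducible
    assignment = {}
    for start in range(0, len(ordered), len(arms)):
        block = ordered[start:start + len(arms)]
        shuffled = list(arms)
        rng.shuffle(shuffled)
        assignment.update(zip(block, shuffled))
    return assignment


# Hypothetical regions: (population in thousands, GDP in billions,
# relevant tech companies, monthly searches). Not data from the study.
covariate_names = ("population", "gdp", "tech_firms", "searches")
raw = {
    "region_a": (1500, 140, 420, 9000),
    "region_b": (1450, 150, 400, 8700),
    "region_c": (900, 80, 210, 5200),
    "region_d": (950, 75, 230, 5500),
    "region_e": (600, 50, 120, 3100),
    "region_f": (620, 48, 110, 3300),
    "region_g": (300, 22, 60, 1500),
    "region_h": (280, 25, 55, 1400),
}
covariates = {r: dict(zip(covariate_names, v)) for r, v in raw.items()}
assignment = blocked_assignment(covariates, arms=("ai", "expert"))
print(assignment)
```

Each pair of similar regions receives one arm each. This keeps the arms balanced on the covariates, while the shuffle still leaves the final choice to chance.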

Have sufficient statistical power

- Statistical power is the probability that a test detects an effect that really exists. Without enough of it, even a genuine improvement may fail to reach statistical significance. Our A/B test ran in four distinct geographic blocks, and in each block the budgets were optimized as a separate campaign. This gave us 4 units per treatment, which increased power enough to obtain statistically significant results. The general rule is: the more data, the better.
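The rough sketch below shows why 4 units per arm is a small sample and how power grows with more units. It assumes a two-sided comparison of two arms' means and the usual normal approximation, which is our simplification and not the study's method. The effect size d is the difference in means divided by the standard deviation between units.

```python
from math import sqrt
from statistics import NormalDist


def approx_power(effect_size: float, n_per_arm: int, alpha: float = 0.05) -> float:
    """Approximate power of a two-sided, two-sample comparison of means.

    Normal approximation: power ~ Phi(|d| * sqrt(n / 2) - z_{1 - alpha/2}).
    The negligible opposite rejection tail is ignored.
    """
    std_normal = NormalDist()
    z_crit = std_normal.inv_cdf(1 - alpha / 2)
    return std_normal.cdf(abs(effect_size) * sqrt(n_per_arm / 2) - z_crit)


# Power for a large standardized effect (d = 1.0) at increasing sample sizes.
for n in (4, 8, 16, 32):
    print(n, round(approx_power(effect_size=1.0, n_per_arm=n), 2))
```

With very few units, this approximation overstates power. An exact calculation based on the t distribution, for example statsmodels' `TTestIndPower`, gives a lower figure. This is one more reason why collecting more data helps.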

Control as many factors as possible during setup

- This may seem fairly obvious, but when you run multiple campaigns across multiple channels, things sometimes slip through the cracks. If you change a campaign in group A (for example, the audience segment you target), you must replicate the same change in group B. Otherwise, it becomes extremely difficult to claim that budget optimization, for instance, was the reason one group outperformed the other. When you control for this carefully, the actual impact on performance becomes much clearer.
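A simple safeguard is to compare the two groups' campaign configurations automatically before and during the test. The minimal sketch below assumes each group's settings can be exported as a flat dictionary. The keys and values are hypothetical and do not correspond to any real ad platform's API.

```python
def unmatched_settings(group_a: dict, group_b: dict, tested: set) -> dict:
    """Return settings that differ between two campaign configurations,
    ignoring the keys that are intentionally varied (the treatment)."""
    keys = (group_a.keys() | group_b.keys()) - tested
    return {
        k: (group_a.get(k), group_b.get(k))
        for k in sorted(keys)
        if group_a.get(k) != group_b.get(k)
    }


# Hypothetical settings; key names are illustrative only.
group_a = {"audience": "it_professionals", "channels": ("search", "display"),
           "bidding": "max_clicks", "budget_strategy": "ai"}
group_b = {"audience": "it_managers", "channels": ("search", "display"),
           "bidding": "max_clicks", "budget_strategy": "expert"}

diff = unmatched_settings(group_a, group_b, tested={"budget_strategy"})
if diff:
    print("Settings to align before comparing:", diff)
```

In this example, only the audience mismatch is flagged. The budget strategy is excluded on purpose, because it is the factor under test.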

Use appropriate statistical models

- Marketers often look at A/B test results in their raw form. For example, group A recorded 400 conversions and group B recorded 500, so group B performed better. A statistical analysis gives you much more robust results, because it tells you whether a difference like that is larger than random variation alone would produce. That is why it is important to choose the right models for the analysis. In our case, we used Difference-in-Differences and ANOVA to analyze the results. Including additional factors in the analysis, such as money spent, day of the week and regional fixed effects, further improves the accuracy of the estimates.
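The ANOVA idea can be made concrete with a short example. A one-way ANOVA compares the variation between group means with the variation within groups. A large F statistic means the group differences are large relative to the noise. The minimal pure-Python sketch below assumes one click total per geographic unit and arm, with hypothetical numbers that are not the study's data.

```python
from statistics import mean


def one_way_anova_f(*groups):
    """Return (F, df_between, df_within) for a one-way ANOVA."""
    k = len(groups)
    n_total = sum(len(g) for g in groups)
    grand_mean = mean([x for g in groups for x in g])
    # Between-group variation: how far each group mean is from the grand mean.
    ss_between = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in groups)
    # Within-group variation: spread of units around their own group mean.
    ss_within = sum((x - mean(g)) ** 2 for g in groups for x in g)
    df_between, df_within = k - 1, n_total - k
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within


# Hypothetical clicks per geographic unit (4 units per arm), not study data.
control = [10, 12, 11, 13]
expert = [14, 15, 13, 16]
ai = [17, 18, 16, 19]

f_stat, df_b, df_w = one_way_anova_f(control, expert, ai)
print(f"F = {f_stat:.1f} with ({df_b}, {df_w}) degrees of freedom")
```

Here F = 21.6 with (2, 9) degrees of freedom. Turning F into a p-value requires the F distribution. For example, `scipy.stats.f_oneway` returns both the statistic and the p-value. Additional factors such as spend, day of the week and regional fixed effects are usually handled in a regression model rather than a plain one-way ANOVA.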

Ultimately, A/B tests are a very powerful yet relatively simple way to find out how your various marketing efforts actually affect results.

In our case, we were able to conclude that yes, AI-driven budget optimization outperformed the same optimization performed by a marketing expert, producing 19% more clicks.

Special thanks to Flavia Wagner and Dr. Markus Meierer of the University of Zurich for this collaboration.